If you’re reading this, chances are you’re part of an FTC robotics team and trying to figure out Vuforia. It looks crazy intimidating, but it really isn’t that difficult to get set up and running. Once it’s going, you can play around with programming it to recognize other elements of the game field to aid in autonomous navigation.

Below is a quick tutorial I put together as I worked through the entire process. Follow this step by step and you’ll be able to get the bot’s camera to recognize elements of the field.

To implement Vuforia, you’ll need to create an account and generate a license key. This is free to do, and quite easy.

Register and obtain a license key

- Register for a Vuforia developer account here – https://developer.vuforia.com/user/register

- After you’ve completed the registration, check your email and click on the link to validate your account

- Log into the Dev Portal after it’s validated

- From there, click the Develop tab, which will take you to the License Manager screen. If that doesn’t work for some reason, you can just use this link: https://developer.vuforia.com/targetmanager/licenseManager/licenseListing

Create your Vuforia license key

- Click – Add License Key

- Choose Development

- Name it (use your team name or bot name)

- Device Type : Mobile

- License Key : Develop – no charge

- Click Next

- Check the box to confirm the agreement (feel free to read it first if you have time 🙂)

- Click Confirm

- Keep this browser window open; we’ll need it in about 5 minutes.

Let’s Get To Work!

Now that you have your license key created, we’ll work in Android Studio and create your new class.

Create a new class for your project

- Open Android Studio

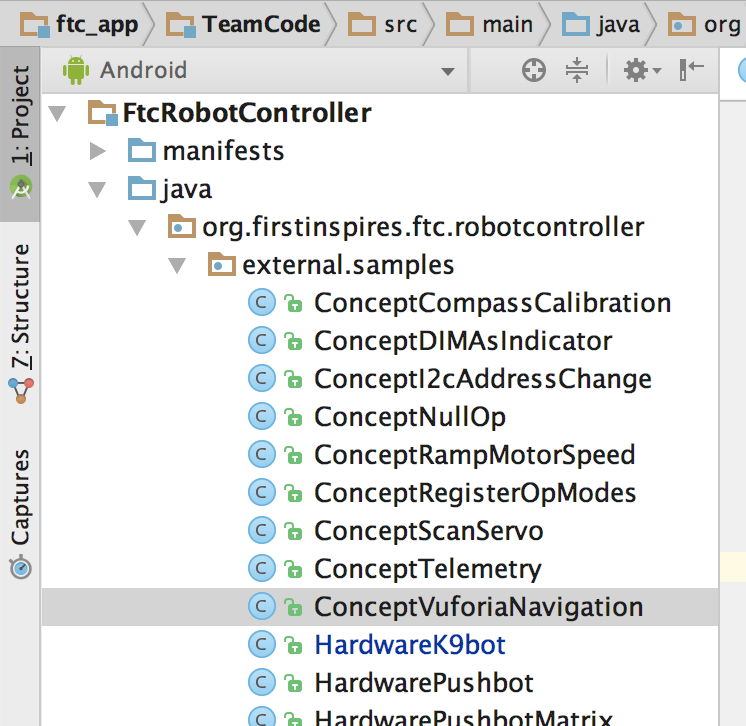

- Navigate to FtcRobotController > java > org.firstinspires.ftc.robotcontroller > external.samples

- Find the ConceptVuforiaNavigation class

- Right click on it and choose Copy

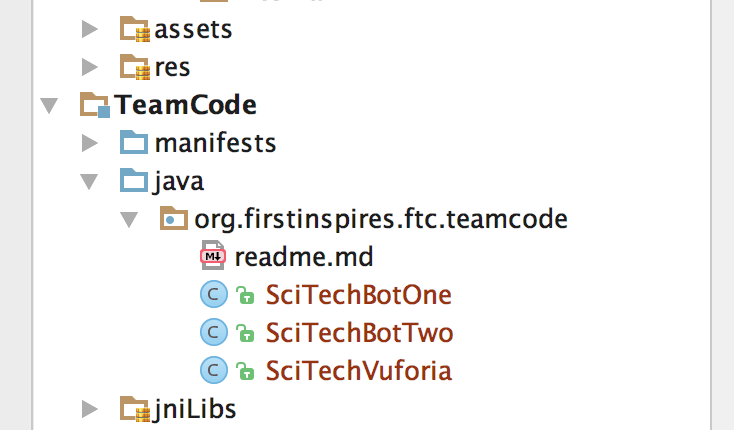

- Navigate to TeamCode > java > org.firstinspires.ftc.teamcode (you can see two of our other opmodes listed in the screenshot; you won’t have those)

- Right click on org.firstinspires.ftc.teamcode

- Choose Paste to create a new class here

- A dialog box will pop up asking you to give your new class a name (I chose SciTechVuforia)

- If the class didn’t open when it pasted, double click on it to open it.

Enable the opmode

- The new SDK lets you register an opmode from within the class itself, instead of having to register it in a separate file like last year. To enable your new class, scroll down, find the @Disabled line, and either delete it or comment it out by adding // in front, like this: //@Disabled

- You can also set the name and group for your new opmode with the following line

@Autonomous(name="Concept: Vuforia Navigation", group="Concept")

We’ll just leave this as is, so on the Driver Station, when we get there shortly, you’ll see “Concept: Vuforia Navigation” listed.
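If you’re curious how a couple of annotations can decide what shows up in the Driver Station list, here is a rough, self-contained sketch of the idea. It is not the SDK’s actual registration code. The `Autonomous` and `Disabled` annotations below are stand-ins declared just for this example (the real ones ship with the FTC SDK), and `OpModeMenuSketch`, `visibleNames` and `StillDisabledOpMode` are made-up names.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper: builds a pretend menu from annotated classes.
class OpModeMenuSketch {
    // Returns the menu names of classes that carry @Autonomous and are not @Disabled.
    static List<String> visibleNames(Class<?>... candidates) {
        List<String> names = new ArrayList<>();
        for (Class<?> candidate : candidates) {
            Autonomous info = candidate.getAnnotation(Autonomous.class);
            if (info != null && !candidate.isAnnotationPresent(Disabled.class)) {
                names.add(info.name());
            }
        }
        return names;
    }

    public static void main(String[] args) {
        // prints [Concept: Vuforia Navigation]
        System.out.println(visibleNames(SciTechVuforia.class, StillDisabledOpMode.class));
    }
}

// Stand-in for the SDK annotation of the same name (illustration only).
@Retention(RetentionPolicy.RUNTIME)
@Target(ElementType.TYPE)
@interface Autonomous {
    String name() default "";
    String group() default "";
}

// Stand-in for the SDK annotation of the same name (illustration only).
@Retention(RetentionPolicy.RUNTIME)
@Target(ElementType.TYPE)
@interface Disabled {
}

// @Disabled has been removed, so this class appears on the pretend menu.
@Autonomous(name = "Concept: Vuforia Navigation", group = "Concept")
class SciTechVuforia {
}

// @Disabled is still active, so this class stays hidden.
@Autonomous(name = "Some Other Opmode", group = "Concept")
@Disabled
class StillDisabledOpMode {
}
```

The takeaway is the same as in the steps above: as long as @Disabled is on the class, it won’t be listed, and the name you see on the menu comes from the name= value.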

- Now let’s add your Vuforia License Key

Scroll down a bit and find the line that reads:

parameters.vuforiaLicenseKey = ""

Copy your license key

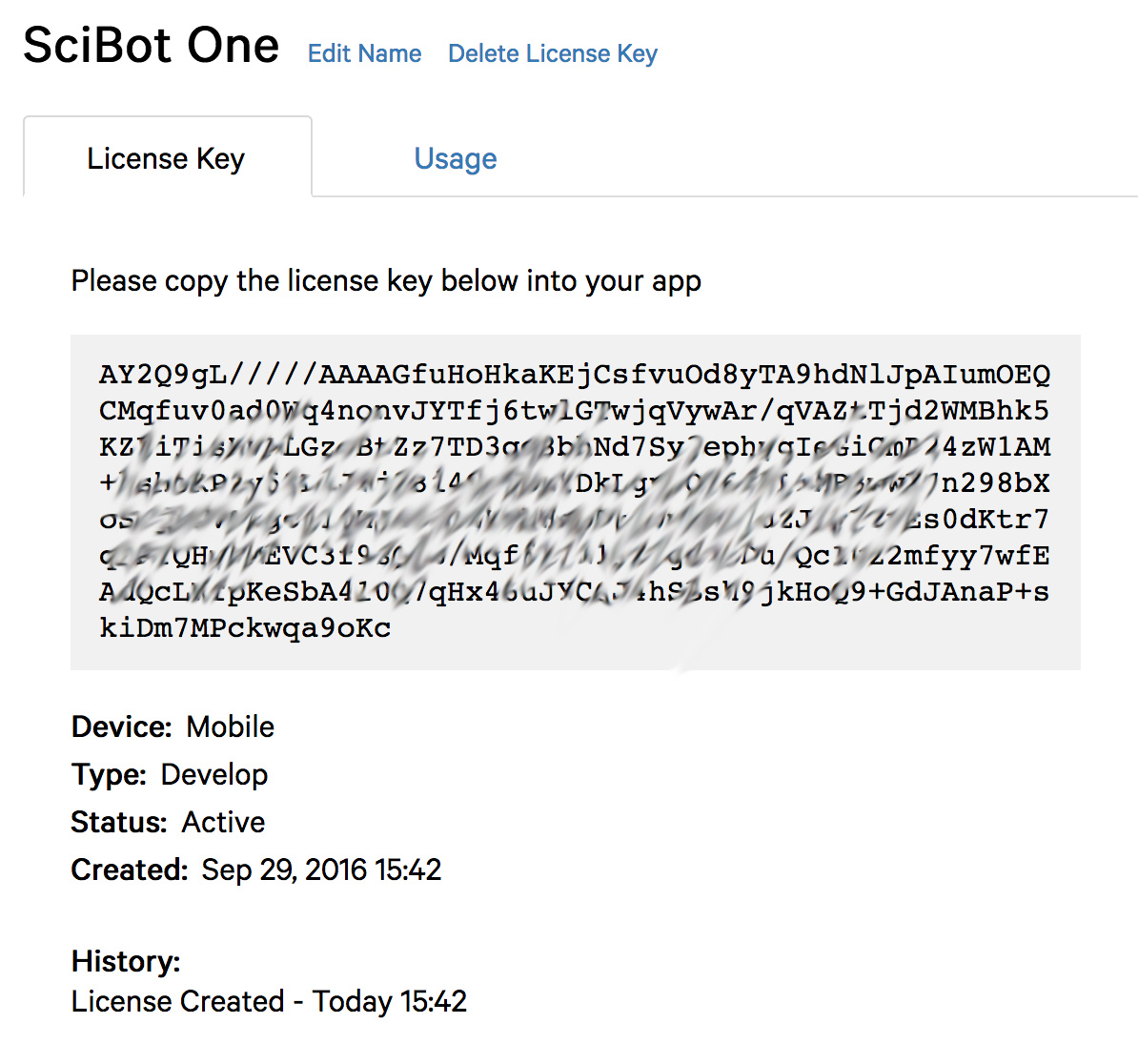

- Flip back to your browser where you have Vuforia opened on the License Manager screen. If it’s not there, log in and click the Develop tab.

- Click the name of the key you created and it will display a screen like the one in the image (I’ve smudged out mine for security reasons)

- select and copy the entire key

- Flip back to Android Studio

- Paste your key between the quotes

- Leave the rest of the default concept code as is; it will use the back-facing camera (NOT the selfie camera)

- Save your changes
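An easy slip at the paste step is leaving the quotes empty or picking up a stray line break along with the key. Below is a small sanity check you could run on the string before trusting it. It is only a sketch: `LicenseKeyCheck` and `problemWith` are made-up names, the sample strings are placeholders rather than real keys, and the rule that a key is one unbroken string with no whitespace is an assumption on my part.

```java
// Hypothetical helper, not part of the FTC SDK or Vuforia.
class LicenseKeyCheck {
    // Returns null if the key looks usable, otherwise a short description of the problem.
    // Assumption: a valid key is a single unbroken string with no whitespace.
    static String problemWith(String key) {
        if (key == null || key.isEmpty()) {
            return "key is empty: paste it between the quotes";
        }
        for (int i = 0; i < key.length(); i++) {
            if (Character.isWhitespace(key.charAt(i))) {
                return "key contains whitespace at index " + i
                        + ": it may have been pasted with a line break";
            }
        }
        return null;
    }

    public static void main(String[] args) {
        // Placeholder strings only; use your own key from the License Manager.
        System.out.println(problemWith(""));
        System.out.println(problemWith("AbC123/xyz+\n456"));
        System.out.println(problemWith("AbC123/xyz+456")); // prints null
    }
}
```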

That’s all the code and setup you need to do.

Let’s test your setup

- Plug your robot controller phone into the computer

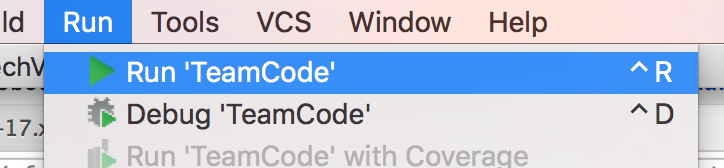

- From the Android Studio menu bar choose Run > Run ‘TeamCode’ to build the app and install it on your phone

- When the build is successful and, like normal, you see the app open on the phone, disconnect it and hook it up on your bot

- On the driver station phone :

- choose autonomous

- pick Concept: Vuforia Navigation (or whatever name you gave it above)

- tap init

- a rectangle for the camera image will display; this is a good thing

- tap start

- a camera image should display on the robot controller screen, using the back camera (not the “Selfie” one)

- telemetry data should appear on the driver station phone

- If this is working, congratulations: your code is all good, no typos.

Test the image recognition

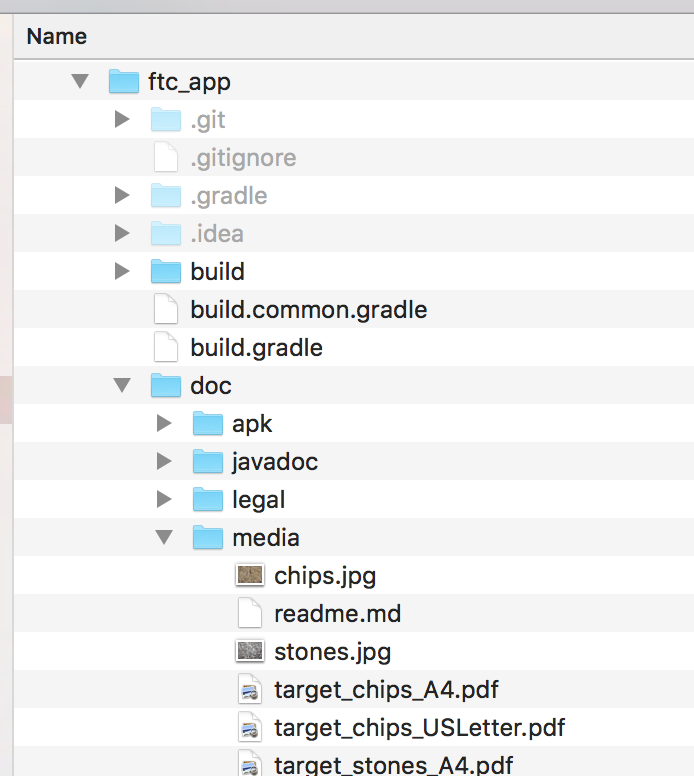

There are images you can use to test this located in the FTC App SDK you’ve downloaded. Open up Explorer (or Finder on Mac) and find the directory /ftc_app/docs/media. In here there will be two images, stones.jpg and chips.jpg. For now, just open one of them on your computer screen (we’ll save some paper and won’t print these).

Now the fun part. Turn your camera so it points to the image on your screen, and if you look on the robot controller phone screen, you’ll see the image with superimposed blue, red and yellow axes. The phone recognizes the image and displays the X, Y and Z axes over it.

Look at the telemetry data on your driver station, and you should see that one of the targets is visible, along with telemetry data about the camera position in relation to the image and “the field”.
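Once you have position numbers like these, you can start turning them into something useful for autonomous navigation. Here is a minimal sketch of one way to do that, working out how far away the target is and which way to turn. Everything in it is hypothetical: `TargetRangeSketch` is a made-up class, and it assumes you have already pulled a sideways offset and a forward distance out of the telemetry. Which axis is which on your phone is something you’d need to check for yourself.

```java
// Hypothetical helper; plain math only, no SDK calls.
class TargetRangeSketch {
    // Straight-line distance to the target, in the same units as the inputs.
    static double range(double sideways, double forward) {
        return Math.hypot(sideways, forward);
    }

    // Angle to the target in degrees: 0 means straight ahead,
    // positive means the target is off toward the positive sideways direction.
    static double bearingDeg(double sideways, double forward) {
        return Math.toDegrees(Math.atan2(sideways, forward));
    }

    public static void main(String[] args) {
        // Made-up example numbers: target is 300 units to the side, 400 units ahead.
        double sideways = 300.0;
        double forward = 400.0;
        System.out.println("range   = " + range(sideways, forward));
        System.out.println("bearing = " + bearingDeg(sideways, forward) + " degrees");
    }
}
```

With the example numbers the range works out to about 500 units and the bearing to roughly 37 degrees, so the bot would need to turn toward the target before driving at it.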

The SDK comes with a target database that contains the real Vortex images, so you don’t need to train the app to recognize those. There are tutorials on YouTube if you want to train it to detect other images or objects, and the Vuforia website helps you do this as well. Just check out their Target Manager section and the tutorial on Model Targets.

Good luck!